Claude’s core service depends on data processing. It interacts with the prompts you enter, the files you upload, and related metadata to generate responses. Like most AI systems, Claude collects and handles some user data behind the scenes.

The specifics, like how this information is stored or who accesses it, are not always obvious to everyday users. The details are scattered across the privacy policy documentation, which we’ll break down in this guide.

We’ll give you key insights into the Claude AI privacy policy, covering:

- What data Claude collects

- Whether your chats are used to train AI models

- Whether your data is shared with third parties

Claude and Its Privacy Policy: An Overview

Claude is an AI chatbot accessible via web browser and iOS and Android apps. As a conversational assistant using natural language processing (NLP), Claude can support a wide range of tasks, including answering questions, drafting text, assisting with coding, and summarizing documents. It processes prompts, instructions, and uploaded files in real time to generate contextual responses.

Because these interactions may involve sensitive, proprietary, or personally identifiable information, users typically look into Claude’s privacy policy to understand whether their chats are used to train the AI model or if any third party can access that data.

Claude is built and maintained by Anthropic, an AI research company, so it follows Anthropic’s data practices. How user information is collected, stored, used, or occasionally shared is governed by the Anthropic Privacy Policy.

We’ll review the essential policy terms in the following sections so you can be more intentional about what you share when interacting with the service.

What the Claude AI Privacy Policy Says About Data Collection

Under Anthropic’s privacy policy, Claude may collect several categories of information to operate and maintain the service. This includes personal data, payment details, device information, and actual conversations, summarized below:

| Data Category | What Claude May Collect |
| --- | --- |
| Account data | Your name, email address, and phone number |
| Payment information | Your payment details if you subscribe to paid Anthropic services like Claude Pro |
| Your conversations | The prompts you enter and the files you upload |
| Technical and device identifiers | Device type, OS, browser, IP address, mobile carrier or internet service provider (ISP), and connection details |
| Usage information | Dates, times, and frequency of access, features used, pages viewed, error logs, and browsing history |
| Cookies and trackers | Cookies, scripts, and similar tracking technologies used for operation, personalization, and analytics |

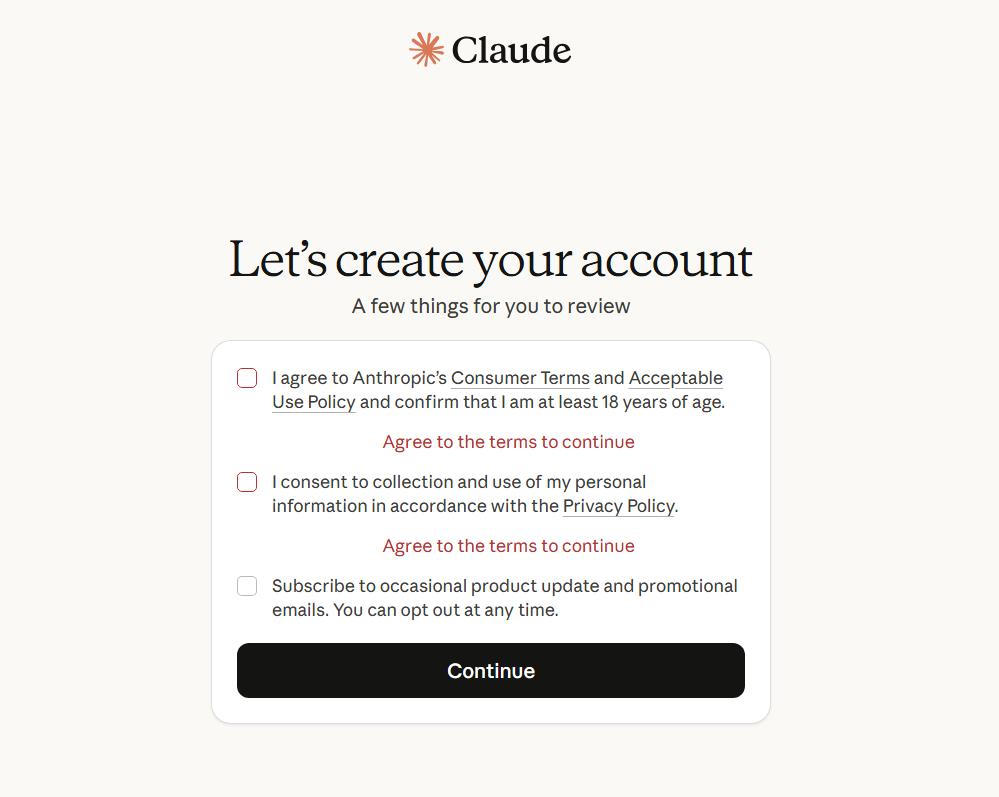

It’s worth noting that other conversational AI chatbots, such as Google Gemini and Perplexity AI, collect similar categories of information as part of delivering and maintaining their services. For Claude, you must consent to data collection during signup.

As an everyday user, remember that any information you include in a Claude prompt, personal details among them, may be part of the data collected under Anthropic’s policy. When you use Claude’s mobile app, Anthropic may also collect technical information depending on your device and network, such as your location, connection details, and device usage patterns.

How Does Claude Store and Use Your Data?

When you use Claude, the information you provide is stored and processed on Anthropic’s systems. The company may process data across multiple regions, with servers located in the United States, Europe, Asia, and Australia.

According to Anthropic’s data usage policy for Claude, the processed data primarily helps the company provide, maintain, and improve its services. Some common use cases include:

- Basic processing to generate responses

- Managing your account

- Handling subscription payments

- Sending you service-related messages

Anthropic takes several steps to keep your data secure, including encrypting it while in transit and at rest, as well as implementing robust security measures such as anti-malware protection, network segmentation, and multi-factor authentication.

Access to conversations is restricted through strict access controls. Designated Anthropic employees may access user conversations in limited scenarios like reviewing policy violations or when you explicitly consent to share data as part of feedback.

Does Claude Use Your Data To Train AI Models?

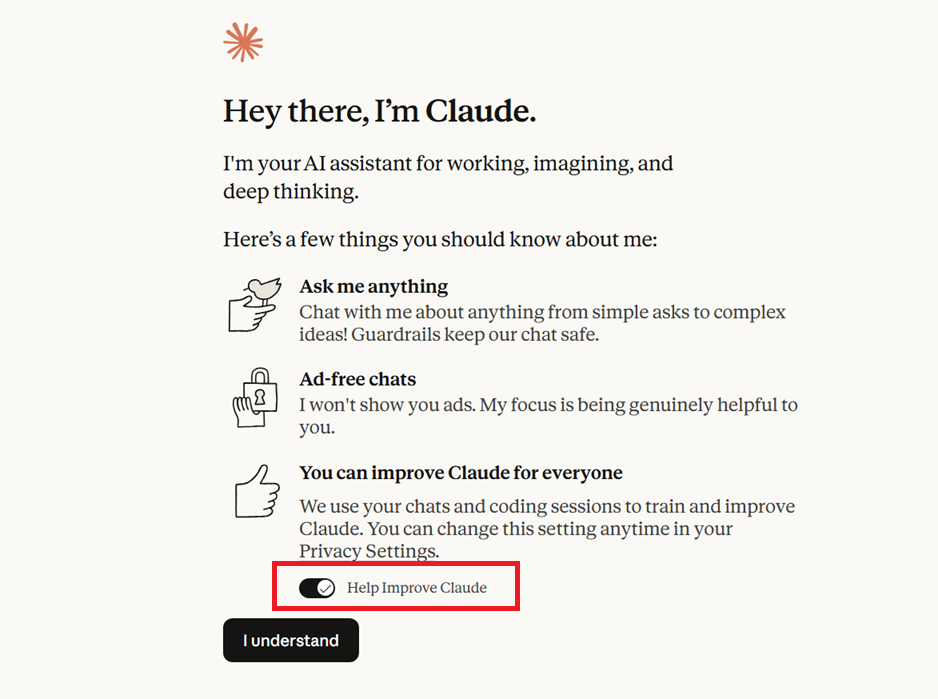

Yes, Anthropic may use the content of your chats to train its AI models, but only if you explicitly allow it. In a 2025 update to its Consumer Terms and Privacy Policy, Anthropic introduced new data training terms that require every user to choose whether their conversations and coding sessions can be used to improve its AI models.

Existing users on consumer plans like Free, Pro, or Max got a notification to review their settings by October 8, 2025. For new users, the choice is presented during signup.

You can revoke this permission at any time by disabling the Help Improve Claude toggle in your Privacy Settings.

Your chats may also be used for model training if:

- Any conversation is flagged for safety review

- You voluntarily submit feedback (e.g., by using the thumbs up/down button)

- You join Claude’s Trusted Tester Program

Claude’s incognito mode can be helpful if you want to keep a particular conversation out of model training. Incognito chats are excluded from training even if you have enabled model improvement in your privacy settings.

For enterprise/commercial products like Claude for Work, Anthropic API, and Claude Gov, user data is not used for training by default unless the organization explicitly allows it.

Note: Claude’s models are trained on broader sources, including publicly available or licensed datasets from third parties that may contain your personal data. So, there’s a possibility your information may still end up in training datasets.

Does Claude Share Your Data With Third Parties?

Claude’s privacy policy clearly states that it doesn’t sell your personal data to outside companies for advertising or marketing purposes. However, Anthropic may share personal data with these third parties within the limits of the policy:

- Affiliates that are part of Anthropic’s corporate structure

- Service providers and business partners that help it run the service

- Parties involved in corporate transactions, like a merger or sale

- Law enforcement or government agencies, when legally required

Additionally, if you interact with third-party apps or websites through Claude (like following links to external services), those providers will collect data under their own privacy policy.

How Long Does Claude Retain Your Data?

Under Anthropic’s data retention policy, the company keeps your personal data for as long as necessary to operate the service.

However, there’s a specific retention timeline for your chats. This period varies depending on your account type and privacy settings. Here’s a snapshot:

| Scenario | Standard Retention Window for Chats |
| --- | --- |
| Consumer plans (Free, Pro, Max) with model training opted out | 30 days |
| Consumer plans with model training opted in | Up to 5 years |
| Incognito chats | 30 days |
| Enterprise/commercial plans | |

Under Anthropic’s enterprise data retention policy, API customers from sensitive sectors like healthcare and finance can negotiate a Zero Data Retention (ZDR) agreement, in which chats are not stored on servers and are processed only for real-time safety checks.

Tips To Protect Your Privacy When Using Claude

Below are four practical steps you can take to reduce your data exposure without compromising Claude’s usability:

- Do not share sensitive details in Claude chats

- Adjust your Claude privacy settings

- Delete unnecessary chats

- Secure your mobile network

1. Do Not Share Sensitive Details in Claude Chats

Everything you type into Claude becomes part of the data it collects, so avoid sharing details like your home address, phone number, passwords, financial data, or medical records.

If you need assistance with real-world tasks, use anonymized or dummy data instead of actual details. For example, if you want to summarize a document containing conversation transcripts with five prospects, you can first replace their names with Prospect A, Prospect B, and so on, as well as remove other identifying details like employers or addresses.
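
If you prefer to script this step, the renaming described above can be sketched in a few lines of Python that you run locally before pasting anything into Claude. This is a minimal illustration, not a complete anonymization tool: the function name `pseudonymize` and the sample names are hypothetical, it only replaces the exact names you list, and it will not catch nicknames, employers, addresses, or other identifying details.

```python
import re


def pseudonymize(text, names):
    """Replace each listed name with a stable label: Prospect A, Prospect B, ..."""
    if not names:
        return text
    if len(names) > 26:
        raise ValueError("this sketch only labels up to 26 names")

    # Labels follow the order of the list you pass in.
    labels = {
        name.lower(): f"Prospect {chr(ord('A') + index)}"
        for index, name in enumerate(names)
    }

    # Try longer names first so a full name wins over a shorter overlapping one.
    ordered = sorted(labels, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(name) for name in ordered) + r")\b",
        re.IGNORECASE,
    )

    # A single pass avoids re-replacing text inside labels already inserted.
    return pattern.sub(lambda match: labels[match.group(0).lower()], text)


# Hypothetical transcript snippet and names, for illustration only.
transcript = (
    "Dana Whitfield asked about pricing. "
    "Later, Omar Reyes and dana whitfield compared plans."
)
print(pseudonymize(transcript, ["Dana Whitfield", "Omar Reyes"]))
# Prospect A asked about pricing. Later, Prospect B and Prospect A compared plans.
```

Because the same name always maps to the same label, Claude’s summary still distinguishes the prospects, and you can map the labels back to real names on your own device afterward.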

2. Adjust Your Claude Privacy Settings

Claude offers several built-in controls to manage how your data is used. Revisit these settings periodically to ensure they still match your preferences after app or policy updates. The three key settings to review are:

- Training controls: Turn off the Help Improve Claude toggle whenever you want to stop sharing data for AI model training.

- Incognito chat: Conversations in Incognito mode are not used for training and have a shorter retention window. To turn it on, start a new chat and click the small ghost icon in the top right corner of the window.

- Connectors: Find all integrated accounts or services by going into the Connectors tab in your Account Settings. Remove any services from the list that you no longer use or want connected within Claude.

3. Delete Unnecessary Chats

If you opt in for model training, Claude retains your data for up to five years. You can delete older chats if you want to reduce the retention window. Deleted chats are removed from Anthropic’s back-end within 30 days, unless the data is already being used in training pipelines.

Here’s how to delete an existing chat:

- Open the conversation you want to remove

- Click the drop-down icon next to the chat title

- Select Delete and confirm your choice

4. Secure Your Mobile Network

If you frequently use Claude on mobile devices, your privacy exposure isn’t just limited to the app. Even with Claude’s built-in privacy controls, your interactions may still pass through your mobile network, which can collect its own metadata about how and when you access Claude and other app-based services.

Most major carriers today aren’t built for privacy. They collect vast amounts of data by default, often linking it to your real identity, billing details, and location history. This creates a parallel profile of your digital activity independent of what third-party apps like Claude collect.

Carrier infrastructure also has a long history of breaches that enable fraud, identity theft, and account takeover. For instance, T-Mobile alone has suffered multiple large-scale breaches in recent years, exposing the personal information of millions of its customers. AT&T disclosed a similar hacking incident that compromised the sensitive records of nearly all of its wireless subscribers.

To reduce exposure at the network level, switch to a private carrier service like Cape. We built a privacy-native infrastructure to limit the data collected at the source and offer multiple unique features that keep your mobile-linked identity safe.

Cape: The Carrier Built for Security and Privacy

Cape is a privacy-first mobile carrier designed to keep your communications safe from surveillance and misuse. Unlike traditional cell phone plan providers, our business model centers on providing you with premium, secure calls, texts, and data, rather than harvesting and selling your information.

Our service is built from the ground up with privacy and security at its core, offering unique features like:

- Cape doesn’t ask for your name, address, or Social Security number. We collect only what’s required to provide service and keep it for the shortest time possible.

- Traditional carriers use a fixed International Mobile Subscriber ID (IMSI), making your device trackable. Cape automatically rotates your IMSI every 24 hours, which makes tracking much harder.

- We don’t ask for names or addresses. Payments are tokenized by Stripe and stored separately from your account, so financial details can’t be linked back to you.

- Unencrypted SMS can expose OTPs and sensitive data. Cape encrypts and routes SMS/MMS through the app, so intercepted messages remain unreadable. Currently available on iPhone; Android coming soon.

- Most U.S. carriers store your call and text metadata for years, sometimes indefinitely. Cape is built to forget, so call data records (CDRs) are deleted after just 24 hours.

- Legacy protocols like SS7 enable tracking and interception. Cape verifies your device’s physical location before network attachment and automatically blocks suspicious connections.

- Many services ask for your phone number, but sharing it exposes you to spam, scammers, data brokers, and a variety of other risks. VoIPs, on the other hand, don’t work with 2FA, cost extra, and aren’t encrypted. Cape gives you two free SMS/MMS lines that are end-to-end encrypted.

- Cape nullifies the threat of SIM swapping by completely removing humans from the loop. During signup, you receive a 24-word phrase that generates a private key tied to your number. Only you can move your number to a new device or carrier; not even Cape can.

- Traditional voicemail systems are outdated, unencrypted, and another security hole bad actors can exploit to gain access to your sensitive information. Cape encrypts all voicemails, ensuring only you can access them.

- While roaming, your phone connects to local telecom providers, which leaves your service prone to interception. Cape provides you with peace of mind by routing your traffic through our U.S.-based mobile core to keep your identity and communications private.

Ditch Legacy Carriers: Get Cape Today

Cape is a “Heavy” Mobile Virtual Network Operator (MVNO), meaning we own our mobile core and provision our own SIMs. This gives us full control over how accounts are authenticated and what data is collected (and for how long), and is how we are able to provide privacy and security features no other carrier on the market can offer.

Get started with Cape today and enjoy the peace of mind of knowing you are protected against scammers, hackers, bad actors, and other mobile threats.

To help protect more than just your phone, we’ve partnered with Proton. As a new Cape subscriber, you can choose between Proton Unlimited and Proton VPN Plus for just $1 for six months.